Activation functions play a vital role in deep learning. They’re like the secret sauce that adds flavor and complexity to our neural networks: they bring our models to life, enabling them to learn intricate patterns and make accurate predictions.

Neural networks are all about making connections and processing information. But without activation functions, these connections would be nothing more than linear operations. Activation functions swoop in to save the day by introducing non-linearity, which is crucial for capturing the intricate relationships hidden within our data.

In this tutorial, you’ll learn why activation functions matter, what activation functions are available in PyTorch, and when to use some of the most common ones.


Understanding the Need for Activation Functions in Deep Learning

Activation functions are what allow a neural network to move from linear transformations to non-linear transformations. This means that the model can learn much more intricate patterns.

In short, activation functions provide the following benefits to neural networks:

- Introducing Non-Linearity: Without activation functions, neural networks would be limited to linear transformations. Much of the world’s data is non-linear, and activation functions let networks model these non-linear relationships.

- Enhancing Gradient Flow: During training, activation functions affect how gradients flow back through the network, which impacts how effectively the model learns from data. Certain activation functions, like ReLU and its variants, alleviate the vanishing gradient problem because their gradients do not saturate for positive inputs. This often leads to more stable and efficient training and faster convergence.

- Handling Output Range: Activation functions also ensure that the outputs of neurons or layers fall within desired ranges. For instance, sigmoid and tanh functions confine their outputs between 0 and 1 or -1 and 1, respectively, which can be advantageous in specific scenarios, such as binary classification or encoding probabilities.
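To make the first point concrete, here is a minimal sketch, using only the standard `torch.nn.Linear` module, showing that two linear layers stacked without an activation function collapse into a single linear layer, and that inserting a ReLU between them breaks that equivalence. The layer sizes and random seed are arbitrary choices for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(5, 4)  # 5 samples, 4 features

# Two stacked linear layers with NO activation function in between
layer1 = nn.Linear(4, 8)
layer2 = nn.Linear(8, 3)
stacked = layer2(layer1(x))

# The same mapping expressed as ONE linear layer:
# W = W2 @ W1 and b = W2 @ b1 + b2
W = layer2.weight @ layer1.weight
b = layer2.weight @ layer1.bias + layer2.bias
single = x @ W.T + b
print(torch.allclose(stacked, single, atol=1e-5))  # the two results match

# Inserting a non-linearity (here ReLU) breaks this equivalence
nonlinear = layer2(torch.relu(layer1(x)))
print(torch.allclose(nonlinear, single, atol=1e-5))
```

However many linear layers you stack, the result is still one linear map; the activation function is what adds expressive power.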

Want to dive into deep learning in Python? Check out my in-depth guide to implementing deep learning models in PyTorch.

Top Activation Functions Available in PyTorch

PyTorch provides many different activation functions. However, a few are so widely used that you’ll encounter them in most deep learning projects. The table below highlights the main activation functions available in PyTorch:

| Activation Function | Description | When to Use |
|---|---|---|
| Sigmoid | Squashes the output between 0 and 1. | Binary classification tasks, probabilistic interpretation of output. |
| ReLU | Sets negative values to zero and keeps positive values unchanged. | Most commonly used activation function in deep neural networks. Alleviates the vanishing gradient problem. |
| Leaky ReLU | Similar to ReLU but applies a small slope to negative inputs instead of zeroing them. | When you want to prevent the dying ReLU problem. |
| Tanh | Squashes the output between -1 and 1. | Recurrent neural networks (RNNs), when you need zero-centered outputs. |
| Softmax | Converts a vector of real values into a probability distribution over classes. | Multi-class classification problems. Ensures the sum of all probabilities is 1. |

Let’s now dive into what these functions look like and how you can implement them in PyTorch.

Implementing the Sigmoid Activation Function in PyTorch

A sigmoid function is a function with an S-shaped curve, also known as a sigmoid curve. The most common example is the logistic function, which is calculated with the following formula:

$$\sigma(x) = \frac{1}{1 + e^{-x}}$$

Let’s implement the sigmoid function in PyTorch to see what it looks like in Python.

```python
import matplotlib.pyplot as plt
import torch
import torch.nn as nn

# Instantiate the sigmoid activation as a module
sigmoid = nn.Sigmoid()

# Evenly spaced inputs from -10 (inclusive) to 10 (exclusive) in steps of 0.001
x = torch.arange(-10, 10, 0.001)
y = sigmoid(x)

plt.plot(x, y)
plt.xlabel('x')
plt.ylabel('sigmoid(x)')
plt.title('Understanding the Sigmoid Activation Function')
plt.show()
```

In the code block above, we implemented the Sigmoid function using PyTorch. The function is instantiated using the nn.Sigmoid class. From there, we can pass in a PyTorch tensor (our variable x), which is then transformed.

Implementing the code above returns the following visualization:

We can see that the function squishes values between 0 and 1. Very negative values are closer to 0, while very positive numbers are closer to 1.

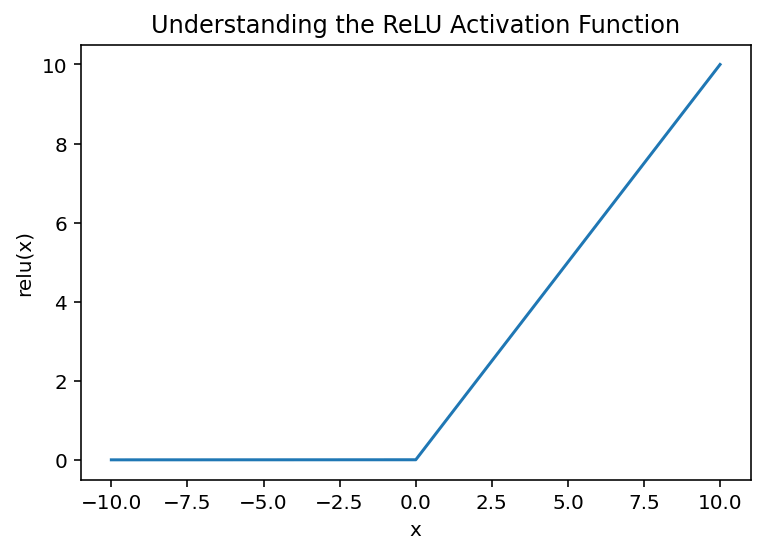
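As a quick sanity check, the sketch below compares `nn.Sigmoid` against the logistic formula 1 / (1 + e^(-x)) written out by hand, then uses autograd to inspect its gradient. Analytically, the derivative is sigmoid(x) * (1 - sigmoid(x)), which peaks at 0.25 when x = 0. The input range is an arbitrary choice for illustration.

```python
import torch
import torch.nn as nn

x = torch.linspace(-10, 10, steps=201, requires_grad=True)

# Built-in module vs. the logistic formula 1 / (1 + e^(-x))
sigmoid = nn.Sigmoid()
y_module = sigmoid(x)
y_manual = 1 / (1 + torch.exp(-x))
print(torch.allclose(y_module, y_manual))

# Gradient via autograd; analytically sigmoid'(x) = sigmoid(x) * (1 - sigmoid(x))
y_module.sum().backward()
print(x.grad.max())  # the largest gradient occurs around x = 0
```

That small maximum gradient of 0.25 is one reason deep stacks of sigmoid layers are prone to vanishing gradients.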

Implementing the ReLU Activation Function in PyTorch

The rectified linear unit (ReLU) function returns the maximum of 0 and its input. The formula is written as below:

$$\text{ReLU}(x) = \max(0, x)$$

Because ReLU is linear for positive inputs, with a gradient of exactly 1 there, it is very helpful when you want to mitigate the vanishing gradient problem.

The vanishing gradient problem refers to a phenomenon that occurs during the training of deep neural networks when the gradients calculated during backpropagation become extremely small as they propagate backward through the network layers. This problem can hinder the learning process and result in slow convergence or even the inability of the network to learn effectively.

ReLU (Rectified Linear Unit) helps mitigate the vanishing gradient problem by addressing the issue of gradient saturation. ReLU sets negative input values to zero and keeps positive values unchanged. By avoiding the saturation of gradients for positive inputs, ReLU allows the gradient to flow more freely during backpropagation. The derivative of ReLU is either 0 or 1, providing a consistent gradient value for positive inputs.
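The sketch below illustrates both points with autograd: ReLU’s gradient is 0 for negative inputs and 1 for positive ones. It then uses a deliberately simplified back-of-the-envelope calculation (ignoring weights entirely, so it is an illustration rather than a real network) to show why chaining sigmoid derivatives, which are at most 0.25, shrinks gradients much faster than chaining ReLU’s derivative of 1. The depth of 10 is an arbitrary assumption.

```python
import torch
import torch.nn as nn

relu = nn.ReLU()

# Gradient of ReLU: 0 for negative inputs, 1 for positive inputs
x = torch.tensor([-2.0, -0.5, 0.5, 3.0], requires_grad=True)
relu(x).sum().backward()
print(x.grad)  # zeros for the negative inputs, ones for the positive inputs

# Simplified illustration (ignores weights): chain the *largest possible*
# sigmoid derivative (0.25) through 10 layers vs. ReLU's derivative (1.0)
depth = 10
print(0.25 ** depth)  # roughly 9.5e-07
print(1.0 ** depth)   # 1.0
```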

Let’s see how we can implement the ReLU function in PyTorch:

```python
import matplotlib.pyplot as plt
import torch
import torch.nn as nn

# Instantiate the ReLU activation as a module
relu = nn.ReLU()

# Evenly spaced inputs from -10 (inclusive) to 10 (exclusive) in steps of 0.001
x = torch.arange(-10, 10, 0.001)
y = relu(x)

plt.plot(x, y)
plt.xlabel('x')
plt.ylabel('relu(x)')
plt.title('Understanding the ReLU Activation Function')
plt.show()
```

In the code block above, we instantiated the ReLU function using the nn.ReLU class. We then created a tensor and passed it into the function to transform it. Implementing the code above returns the following visualization:

In the following section, you’ll learn how to implement an alternative function to the ReLU – the leaky ReLU function.

Implementing the Leaky ReLU Activation Function in PyTorch

Implementing the Leaky ReLU activation function can be beneficial for addressing the “dying ReLU” problem and can improve performance in certain scenarios. Leaky ReLU is a variation of the ReLU activation function that multiplies negative inputs by a small slope (the negative_slope parameter in PyTorch, 0.01 by default) instead of setting them to zero, so a small gradient still flows for negative values.

The dying ReLU problem occurs when a ReLU unit receives negative inputs for (almost) every example. It then always outputs zero, its gradient is zero, and its weights stop updating, so the unit is effectively “dead”. Because Leaky ReLU keeps a small, non-zero gradient for negative inputs, such neurons can still receive updates and recover, promoting better gradient flow and learning.
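Here is a minimal sketch comparing the gradients of `nn.ReLU` and `nn.LeakyReLU` on the same inputs. The input values are arbitrary; `negative_slope=0.01` is PyTorch’s default, passed explicitly here for visibility.

```python
import torch
import torch.nn as nn

x = torch.tensor([-3.0, -1.0, 2.0], requires_grad=True)

# Standard ReLU: zero gradient for every negative input
nn.ReLU()(x).sum().backward()
relu_grad = x.grad.clone()
x.grad.zero_()  # reset before the next backward pass (gradients accumulate)

# Leaky ReLU: negative inputs are multiplied by 0.01,
# so their gradient is 0.01 rather than 0
leaky = nn.LeakyReLU(negative_slope=0.01)
leaky(x).sum().backward()
leaky_grad = x.grad.clone()

print(relu_grad)   # 0 for the two negative inputs, 1 for the positive input
print(leaky_grad)  # 0.01 for the two negative inputs, 1 for the positive input
```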

Let’s see how we can implement the leaky ReLU function in PyTorch:

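```python
import matplotlib.pyplot as plt
import torch
import torch.nn as nn

# Instantiate the leaky ReLU activation (default negative_slope=0.01)
leaky_relu = nn.LeakyReLU()

# Evenly spaced inputs from -10 (inclusive) to 10 (exclusive) in steps of 0.001
x = torch.arange(-10, 10, 0.001)
y = leaky_relu(x)

plt.plot(x, y)
plt.xlabel('x')
plt.ylabel('leaky relu(x)')
plt.title('Understanding the Leaky ReLU Activation Function')
plt.show()
```

In the code block above, we instantiated the leaky ReLU function using the nn.LeakyReLU class. We then created a tensor and passed it into the function. Because the default slope for negative inputs is only 0.01, the negative part of the curve looks almost flat at this scale (at x = -10, the output is just -0.1).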

Implementing the code above returns the following visualization:

Let’s now turn our attention to the Tanh activation function, an alternative to the sigmoid function.

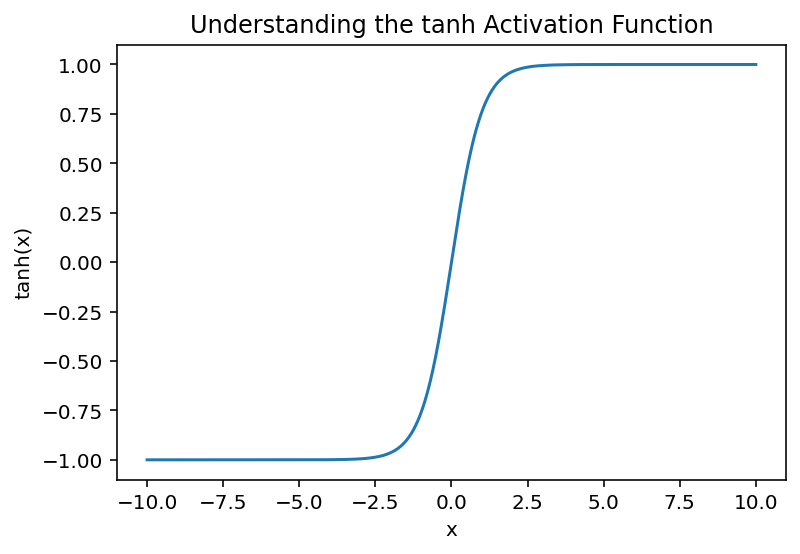

Implementing the Tanh Activation Function in PyTorch

The tanh activation function is a good choice when you need zero-centered outputs. Because it squashes values into the range -1 to +1, centered at 0, negative inputs map to negative outputs and positive inputs to positive outputs.

The tanh function is smooth, meaning that it is differentiable everywhere and its derivative is continuous. This makes it well suited to gradient-based optimization (with the gradients computed by backpropagation) and can lead to more stable training of neural networks.

The tanh function also has the same S-shaped curve as the sigmoid activation function. In fact, tanh is a scaled and shifted version of the sigmoid, tanh(x) = 2 · sigmoid(2x) - 1, with outputs ranging from -1 to 1 instead of 0 to 1. Tanh still saturates for very large positive or negative inputs, but its outputs are zero-centered and its maximum derivative is 1 (at x = 0), compared with 0.25 for the sigmoid, so gradients shrink less quickly near the origin.
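The short sketch below checks two of these properties numerically: that tanh(x) equals 2 · sigmoid(2x) - 1, and that its derivative, 1 - tanh(x)², peaks at 1.0 at x = 0. The input range is an arbitrary choice.

```python
import torch

x = torch.linspace(-5, 5, steps=101, requires_grad=True)

# tanh is a rescaled, shifted sigmoid: tanh(x) = 2 * sigmoid(2x) - 1
y = torch.tanh(x)
rescaled_sigmoid = 2 * torch.sigmoid(2 * x) - 1
print(torch.allclose(y.detach(), rescaled_sigmoid.detach(), atol=1e-5))

# The derivative of tanh is 1 - tanh(x)^2, which peaks at 1.0 when x = 0
# (the sigmoid's derivative peaks at only 0.25)
y.sum().backward()
print(x.grad.max().item())
```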

Finally, tanh is widely used inside recurrent neural networks (RNNs). In gated architectures like the LSTM (Long Short-Term Memory) and GRU (Gated Recurrent Unit), sigmoid functions act as the gates, deciding how much information to keep or pass on, while tanh squashes the candidate state values (and, in an LSTM, the cell state before it is output) into the range -1 to 1. This combination helps RNNs capture long-term dependencies and effectively model sequential data.
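To see where sigmoid and tanh each appear, here is a sketch of a single LSTM step written out by hand and checked against `nn.LSTMCell`. It relies on PyTorch’s documented ordering of the stacked gate weights (input, forget, cell, output); the sizes, seed, and zero initial states are arbitrary choices for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
input_size, hidden_size = 3, 4
cell = nn.LSTMCell(input_size, hidden_size)

x = torch.randn(2, input_size)   # batch of 2 inputs
h = torch.zeros(2, hidden_size)  # previous hidden state
c = torch.zeros(2, hidden_size)  # previous cell state

# One LSTM step by hand, reusing the cell's own weights.
# PyTorch stacks gate weights in the order: input, forget, cell (g), output.
gates = x @ cell.weight_ih.T + cell.bias_ih + h @ cell.weight_hh.T + cell.bias_hh
i, f, g, o = gates.chunk(4, dim=1)
i, f, o = torch.sigmoid(i), torch.sigmoid(f), torch.sigmoid(o)  # gates in (0, 1)
g = torch.tanh(g)                   # candidate values in (-1, 1)
c_next = f * c + i * g              # update the cell state
h_next = o * torch.tanh(c_next)     # tanh squashes the cell state before output

# Compare with PyTorch's built-in implementation
h_ref, c_ref = cell(x, (h, c))
print(torch.allclose(h_next, h_ref, atol=1e-5), torch.allclose(c_next, c_ref, atol=1e-5))
```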

Let’s now take a look at how to implement the tanh function in PyTorch:

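```python
import matplotlib.pyplot as plt
import torch
import torch.nn as nn

# Instantiate the tanh activation as a module
tanh = nn.Tanh()

# Evenly spaced inputs from -10 (inclusive) to 10 (exclusive) in steps of 0.001
x = torch.arange(-10, 10, 0.001)
y = tanh(x)

plt.plot(x, y)
plt.xlabel('x')
plt.ylabel('tanh(x)')
plt.title('Understanding the tanh Activation Function')
plt.show()
```

Implementing the code above returns the following visualization: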

Let’s now take a look at the final function that we’ll review in this tutorial, the softmax activation function.

Implementing the Softmax Activation Function in PyTorch

The softmax activation function is commonly used in neural networks, especially in multi-class classification tasks.

The softmax function outputs a probability distribution over multiple classes. It takes a vector of arbitrary real numbers as input and normalizes them to produce probabilities that sum up to 1. This property makes softmax suitable for tasks where you want to assign probabilities to mutually exclusive classes or make predictions based on class probabilities.
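Concretely, softmax computes exp(x_i) / sum_j exp(x_j) for each element. The sketch below writes this out with a hypothetical helper, `stable_softmax`, which is not part of PyTorch. It subtracts the maximum value first, a common trick that leaves the result unchanged but keeps `exp()` from overflowing on large inputs, and then compares the result with `torch.softmax`.

```python
import torch

def stable_softmax(x: torch.Tensor, dim: int = -1) -> torch.Tensor:
    # softmax(x)_i = exp(x_i) / sum_j exp(x_j)
    # Subtracting the max does not change the result but avoids overflow in exp().
    shifted = x - x.max(dim=dim, keepdim=True).values
    exps = torch.exp(shifted)
    return exps / exps.sum(dim=dim, keepdim=True)

x = torch.tensor([2.0, 1.0, 0.5])
p = stable_softmax(x)
print(p, p.sum())                                # probabilities that sum to 1
print(torch.allclose(p, torch.softmax(x, dim=0)))

# exp(1000.0) overflows to inf in float32, but the shifted version stays finite
big = torch.tensor([1000.0, 999.0])
print(stable_softmax(big))
```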

Let’s see how we can implement the function in PyTorch:

```python
import matplotlib.pyplot as plt
import torch
import torch.nn as nn

# dim=0 normalizes across the only dimension of this 1-D tensor
softmax = nn.Softmax(dim=0)

x = torch.tensor([2.0, 1.0, 0.5])
probabilities = softmax(x)

plt.bar(range(len(x)), probabilities.tolist())
plt.xticks(range(len(x)), x.tolist())  # label each bar with its input value
plt.xlabel('Input')
plt.ylabel('Probability')
plt.title('Softmax Probabilities')
plt.show()
```

Implementing the code above returns the following visualization:

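You can see here that the input scores have been turned into probabilities (roughly 0.63, 0.23, and 0.14) that sum to 1, with the largest input receiving the largest probability. This is what lets a multi-class classifier express how confident it is in each class.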
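In practice, a model usually outputs a batch of scores with shape (batch, classes), so softmax should normalize across the class dimension, `dim=1`, rather than `dim=0`. A minimal sketch with made-up scores for two samples and three classes:

```python
import torch
import torch.nn as nn

# A batch of 2 samples, each with scores for 3 classes: shape (batch, classes)
logits = torch.tensor([[2.0, 1.0, 0.5],
                       [0.1, 0.2, 3.0]])

softmax = nn.Softmax(dim=1)  # normalize across classes, i.e. within each row
probs = softmax(logits)
print(probs.sum(dim=1))      # each row sums to 1
print(probs.argmax(dim=1))   # predicted class per sample
```

If you train with `nn.CrossEntropyLoss`, keep in mind that it applies log-softmax internally and expects raw, unnormalized scores, so an explicit softmax layer is typically applied only when you need probabilities at inference time.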

Frequently Asked Questions (FAQ)

Activation functions play a vital role in neural networks by introducing non-linearity into the model. Non-linearity enables neural networks to learn and represent complex relationships between inputs and outputs. Without activation functions, neural networks would essentially be limited to linear transformations, severely restricting their ability to capture and model intricate patterns in data. Activation functions allow neural networks to approximate non-linear functions, enabling them to solve a wide range of real-world problems, including image classification, natural language processing, and more.

Several activation functions are commonly used in deep learning. Some of the most popular ones include:

- ReLU (Rectified Linear Unit): ReLU sets negative inputs to zero and keeps positive inputs unchanged. It is widely used due to its simplicity, computational efficiency, and effectiveness in mitigating the vanishing gradient problem.

- Sigmoid: The sigmoid activation function maps input values to a range between 0 and 1. It is often used in binary classification tasks where the goal is to predict a probability.

- Tanh (Hyperbolic Tangent): Tanh maps input values to a range between -1 and 1. It is useful when you want zero-centered outputs that capture both positive and negative relationships.

- Softmax: Softmax is primarily used in multi-class classification tasks. It computes probabilities for each class, allowing the network to make predictions based on class probabilities.

The Rectified Linear Unit (ReLU) activation function is widely used in deep learning. Its primary purpose is to introduce non-linearity into the network, allowing neural networks to learn and model complex relationships in the data. ReLU sets negative inputs to zero and keeps positive inputs unchanged. This simple thresholding operation allows ReLU to preserve positive values and discard negative values, effectively activating or deactivating neurons based on the input.

Conclusion

In this tutorial, you learned about activation functions in deep learning. You first learned why activation functions are important. Then, you learned about five of the most important activation functions used in deep learning. Finally, you learned how to implement each of these functions in PyTorch and how to visualize them.

To learn more about the ReLU function, check out the official documentation here.